Presto-Powered S3 Data Warehouse on Kubernetes

Presto is a distributed query engine capable of bringing SQL to a wide variety of data stores, including S3 object stores. In a previous blog post, I set up a Presto data warehouse using Docker that could query data on a FlashBlade S3 object store. This post updates and improves upon this Presto cluster, moving everything, including the Hive Metastore, to run in Kubernetes. The migration process will present several different approaches to application configuration in Kubernetes for different situations. The result is a better understanding of how to run Presto in Kubernetes, along with real examples of Kubernetes configuration concepts.

Dockerfiles, scripts, and yaml files are all in my associated public GitHub repository.

Objectives

The objectives here are two-fold.

First: running Presto in Kubernetes.

Presto continues to grow in popularity and usage, now being incorporated into more analytics offerings. Its versatility and ability to query data in-place make it a great candidate for a modern data warehouse that uses Kubernetes for orchestration, resilience, portability, and scaling.

I chose custom Kubernetes deployments for two reasons. First, while it often makes sense to leverage pre-built Kubernetes charts and operators early on, as an application becomes more strategic it is crucial to have more fine-grained control and expertise. Second, I wanted to better understand both Presto and Kubernetes.

As part of this work to run Presto in Kubernetes, I also switched from prestodb to prestosql in order to better support partitioned Hive tables. The presence of two very similarly named projects that have nearly identical codebases is unfortunately confusing. Prestodb is the original Facebook-maintained distribution and prestosql is a public fork and community version.

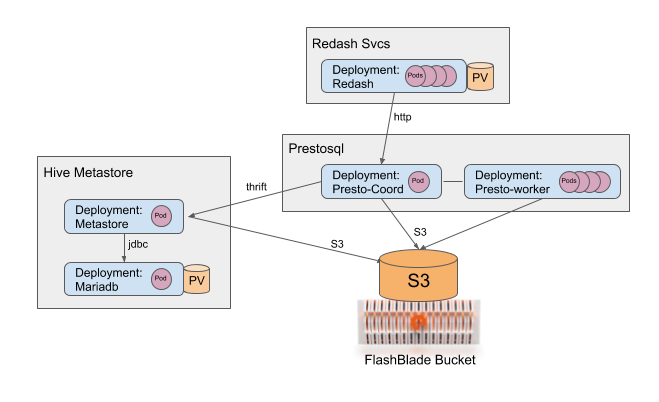

The logical architecture of my data warehouse service is illustrated below with all the services running in Kubernetes except the object store.

Second: concrete examples of different techniques available for configuring applications in Kubernetes.

My first rule for configuring applications in Kubernetes is that all necessary artifacts should be in or generated by code under source control, e.g., git. The exception to this rule is data stored in secrets, like S3 access keys, that should never be stored in a source repository.

While binaries for an application should be stored in a container image, the configuration should live elsewhere. Keeping the two separate gives flexibility in making changes and in running multiple versions of the same application.

The various services I use for Presto leverage different techniques for different situations and reasons: docker images, configmaps, secrets, environment variables, and custom scripts. Each component’s configuration has different formats and needs and therefore nicely illustrates the advantages of each approach.

In Kubernetes, there are two categories of storage options: PersistentVolumes and userspace storage. Configuring PersistentVolumes to use shared storage requires a plugin and managing volume claims. In contrast, an S3 object store can be configured at the application level and does not require any special plugins. Presto leverages a shared object store, meaning storage access can be configured similarly to all other parts of the application. It can be as simple as specifying environment variables for an endpoint address and access/secret keys.

The setup that follows assumes a working Kubernetes installation and the availability of an S3 object store, e.g., a FlashBlade. I also leverage a CSI plugin for the metastore database, but local storage could also be used for non-production clusters.

Component 1: Hive Metastore

Presto relies on the Hive Metastore for metadata about the tables stored on S3. The metastore service consists of two running components: an RDBMS backing database and a stateless metastore service. A third piece is a one-time job that initializes the RDBMS with the necessary schemas and tables.

The Metastore RDBMS: MariaDB

I use MariaDB as the backing database for the Hive Metastore, running a straightforward deployment in Kubernetes. My deployment leverages PSO and a FlashArray volume to provide persistent storage for the MariaDB database.

I prefer to separate the yaml for the PersistentVolumeClaim from the MariaDB service to avoid accidentally deleting my data when I bring down the service with kubectl. By using a PersistentVolume, any or all of the services, including the MariaDB database, can go down temporarily (move, restart, etc.) and I will not lose any of the table definitions that Hive and Presto rely upon.
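As a rough illustration of keeping the claim in its own file, the sketch below emits a standalone PersistentVolumeClaim manifest. The claim name, the size, and the storage class `pure-block` are placeholders chosen for illustration; substitute whatever your CSI plugin provides.

```shell
#!/bin/bash
# Sketch: emit a standalone PersistentVolumeClaim manifest so the claim can be
# created and deleted independently of the MariaDB deployment.
# Assumptions (hypothetical): claim name, size, and storage class defaults.

pvc_manifest() {
    local name="${1:-metastore-db-pvc}"
    local size="${2:-20Gi}"
    local storage_class="${3:-pure-block}"
    cat <<EOF
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: ${name}
spec:
  accessModes:
    - ReadWriteOnce
  storageClassName: ${storage_class}
  resources:
    requests:
      storage: ${size}
EOF
}
```

A possible usage is `pvc_manifest > metastore-pvc.yaml`, followed by `kubectl apply -f metastore-pvc.yaml`. Because the claim lives in its own file, deleting the MariaDB deployment's yaml with kubectl leaves the claim and its data in place.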

As a side note on why xml configuration files are more challenging to work with: if I had used a Kubernetes secret for the MariaDB password, it would then be difficult (though still possible) to inject that value directly into the metastore-site.xml configuration.

Init-schemas Task

Next, init-schemas is a one-time operation to populate the Metastore’s relational database with the table definitions that Hive expects. This task needs to be run only once to initially configure the metastore database and so is a good candidate for a Kubernetes job.

The reason a job fits this particular workload is that it needs to run fully to completion exactly once, therefore not fitting into standard deployment models. An initContainer might seem like a good fit, but there would be a lifecycle mismatch. The initContainer is tied to the lifetime of a pod, whereas the init-schemas job is tied to the lifetime of the persistent storage behind the database. In other words, an initContainer runs too frequently for this use case.

This Kubernetes job is simply a wrapper around an invocation of “/opt/hive-metastore/bin/schematool” with the right arguments. An open question for the reader is how to create a dependency between the completion of the job and the start of the service that depends on it, the Metastore. In the meantime, I run the job manually with “kubectl create” when first setting up the Metastore.
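One possible way to approach that ordering question, sketched under assumptions: wrap schematool in a Job manifest and block on `kubectl wait` before applying the Metastore deployment. The image name, the job name `hive-initschema`, and the file name `metastore.yaml` are hypothetical. `-initSchema -dbType mysql` are standard schematool arguments, though the exact arguments for a given setup may differ.

```shell
#!/bin/bash
# Sketch: a Job manifest that runs schematool once to completion.
# Assumptions (hypothetical): image name, job name, and metastore.yaml.

init_schemas_job() {
    local image="${1:-example/hive-metastore:latest}"   # hypothetical image
    cat <<EOF
apiVersion: batch/v1
kind: Job
metadata:
  name: hive-initschema
spec:
  backoffLimit: 2
  template:
    spec:
      restartPolicy: Never
      containers:
        - name: init-schemas
          image: ${image}
          command: ["/opt/hive-metastore/bin/schematool"]
          args: ["-initSchema", "-dbType", "mysql"]
EOF
}

# Create the job, wait for it to complete, and only then start the Metastore.
init_then_start_metastore() {
    init_schemas_job "$1" | kubectl create -f - || return 1
    kubectl wait --for=condition=complete --timeout=300s job/hive-initschema || return 1
    kubectl apply -f metastore.yaml
}
```

This sketch omits the configuration the job needs to reach the database. A real job would also mount the same metastore-site.xml that the Metastore itself uses.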

Hive Metastore

Presto communicates with a metastore service to find table definitions and partition information. For my Hive metastore, I created a script that builds a custom Docker image, uploads it to a Docker repository, and also creates the necessary configuration data in a configmap.

The Dockerfile contains instructions to install 1) Hadoop, 2) Hive standalone metastore, and 3) the mysql connector. In fact, this image is the same as I used in my prior Docker-only Presto cluster. No custom configuration files are included in this image so that the same image could be used for multiple different clusters. Instead, I will use Kubernetes to store configurations.

Configmaps store the two relevant configuration files: metastore-site.xml and core-site.xml.

The configmap is updated by the following command, part of my Docker image update script, which uses local versions of the two files to populate the configmap:

> kubectl create configmap metastore-cfg --dry-run --from-file=metastore-site.xml --from-file=core-site.xml -o yaml | kubectl apply -f -

Note that the above statement is more complicated than a simple “create configmap” because the command also supports updating an existing configmap.

Diving deeper into what is happening here, a key point is that the configmap is populated from local files. This approach allows me to store the build script and xml files under revision control.

All artifacts necessary to recreate a service should be either stored in source control or generated from code in source control. A good thought exercise to understand why this is valuable is to work through the steps necessary to recreate your services from scratch because of disaster recovery, migration, or environment cloning.
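To make the idea concrete, here is a minimal sketch of what such an update script could look like, assuming the Dockerfile and the two xml files sit in the current directory. The image name is hypothetical. Newer kubectl versions spell the flag `--dry-run=client`, while older versions accept the bare `--dry-run` form.

```shell
#!/bin/bash
# Sketch of an image-and-config update script.
# Assumption (hypothetical): the image repository and tag in IMAGE.
IMAGE="${IMAGE:-example/hive-metastore:latest}"

update_metastore() {
    # Rebuild and publish the image from the local Dockerfile.
    docker build -t "$IMAGE" . || return 1
    docker push "$IMAGE" || return 1

    # Render the configmap from the local xml files, then create or update it.
    # Older kubectl versions use bare --dry-run instead of --dry-run=client.
    kubectl create configmap metastore-cfg --dry-run=client \
        --from-file=metastore-site.xml --from-file=core-site.xml -o yaml |
        kubectl apply -f -
}
```

Everything the function touches (the Dockerfile, the xml files, and the script itself) can live in the same repository, so recreating the service from scratch reduces to checking out the code and running `update_metastore`.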

Mount the configmap as a volume to translate its contents back into files in the running pods:

volumeMounts:
  - name: metastore-cfg-vol
    mountPath: /opt/hive-metastore/conf/metastore-site.xml
    subPath: metastore-site.xml
  - name: metastore-cfg-vol
    mountPath: /opt/hadoop/etc/hadoop/core-site.xml
    subPath: core-site.xml
…
volumes:
  - name: metastore-cfg-vol
    configMap:
      name: metastore-cfg

Finally, the Metastore has one more configuration required: the access keys for reading and writing the S3 object store. Unlike the settings previously discussed, these values are sensitive in nature and are a better fit for Kubernetes secrets.

I created the secret for the key pair as a one-time operation:

> kubectl create secret generic my-s3-keys --from-literal=access-key='XXXXXXX' --from-literal=secret-key='YYYYYYY'

Refer to the “Setup FlashBlade S3” section of my previous blog post for a refresher on how to create the key pair on the FlashBlade.

The access key configuration is passed to the Metastore via environment variables:

env:
  - name: AWS_ACCESS_KEY_ID
    valueFrom:
      secretKeyRef:
        name: my-s3-keys
        key: access-key
  - name: AWS_SECRET_ACCESS_KEY
    valueFrom:
      secretKeyRef:
        name: my-s3-keys
        key: secret-key

The Hive Metastore is now running in Kubernetes, possibly used by other applications like Apache Spark in addition to Presto, which we will set up next.

Component 2: Presto

The Presto service consists of nodes of two role types, coordinator and worker, in addition to a UI and CLI for end-user interactions. I use two separate deployments in Kubernetes, one for each role type. Additionally, I add a standalone pod for CLI client access to Presto.

Kubernetes also made deployment simpler in an unexpected way. Previously, I was forced to use Docker host networking so that the Presto process would bind to the right interface. Presto does not provide a way to bind to all interfaces with “0.0.0.0”, so it was instead binding to the internal Docker interface, which meant it did not respond to requests from external nodes. In Kubernetes, the entire network is internal to the cluster and the software binds to an interface that the other Presto workers can communicate with.

Configuring Presto services involves a mix of techniques: configmaps, secrets, and scripting.

Presto Cluster

The Presto cluster itself is a combination of two deployment types: coordinator and worker. These share the same underlying Docker image and have only small configuration differences. The Presto configuration is coupled with the Hive metastore configuration and is complex enough to warrant multiple techniques to encode configuration information.

Let’s dig into the various approaches used in my Presto yaml: Docker images, configmaps, secrets, and custom scripts. Each approach has advantages for different situations:

- The Dockerfile itself contains a few configuration files which are common to all workers and unlikely to be changed across different environments. For example, dev, QA, and production clusters all share the same image and configurations.

- Similar to the Hive Metastore service, I created a script to push configuration files into a configmap, allowing me to store all artifacts under source control and potentially have different configurations in different places. I could even manually patch the configmap if necessary.

- Kubernetes secrets hold the S3 access and secret keys, which are either injected as environment variables or combined with the script mentioned next. This approach is ideal for sensitive data, like S3 keys or passwords, because it reduces the possibility of accidental exposure.

- I created a custom entrypoint script autoconfig_and_launch.sh. This script combines template files with the hostname and access keys from environment variables to create the final config files at pod startup time. I created this script because no single configuration method described previously fit the requirement and so scripting provided more flexibility.

One example of how my autoconfig script works: each node in the cluster requires a unique identifier in its configuration. The script populates this field from the auto-populated environment variable $HOSTNAME:

cp /opt/presto-server/etc/node.properties.template /opt/presto-server/etc/node.properties
echo "node.id=$HOSTNAME" >> /opt/presto-server/etc/node.properties

Here an individual configuration file, node.properties, is ultimately a combination of fixed configuration stored in the Docker image as a template and additional fields added at runtime.
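The same template-plus-runtime pattern extends to the S3 keys. The following is a minimal sketch of how an entrypoint-style function could render both files. It is not the actual autoconfig_and_launch.sh, and the `hive.properties.template` file and the `S3_ENDPOINT` variable are assumptions made for illustration. The `hive.s3.*` property names are those of the Presto Hive connector.

```shell
#!/bin/bash
# Sketch of an entrypoint-style config renderer.
# Assumptions (hypothetical): the hive.properties.template file and the
# S3_ENDPOINT variable. The AWS_* variables are expected to be injected
# from the Kubernetes secret as environment variables.

render_presto_config() {
    local etc_dir="$1"   # e.g. /opt/presto-server/etc

    # node.properties = fixed template + a per-pod unique id.
    cp "$etc_dir/node.properties.template" "$etc_dir/node.properties"
    echo "node.id=$HOSTNAME" >> "$etc_dir/node.properties"

    # hive.properties = fixed template + S3 settings from the environment.
    cp "$etc_dir/catalog/hive.properties.template" "$etc_dir/catalog/hive.properties"
    {
        echo "hive.s3.endpoint=$S3_ENDPOINT"
        echo "hive.s3.aws-access-key=$AWS_ACCESS_KEY_ID"
        echo "hive.s3.aws-secret-key=$AWS_SECRET_ACCESS_KEY"
    } >> "$etc_dir/catalog/hive.properties"
}
```

An entrypoint would call `render_presto_config /opt/presto-server/etc` and then launch the Presto server. The keys never appear in the image or the configmap; they exist only in the secret and in the files rendered inside the running pod.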

Reconfiguring the Presto cluster can take advantage of Kubernetes tools. A disruptive restart of the cluster can be used to pick up software upgrades like this:

> kubectl rollout restart deployment.apps/presto-coordinator && kubectl rollout restart deployment.apps/presto-worker

And you can easily scale the Presto cluster:

> kubectl scale --replicas=5 deployment.apps/presto-worker

Managing Presto

The Presto management web interface makes it easy to monitor the health of the cluster. The monitoring interface is automatically exposed on the configured http port of the Presto service. In my case, I use port-forwarding to make the service available:

> kubectl port-forward --address 0.0.0.0 service/presto 8080

The screenshot below shows the Presto management UI which is useful for understanding the performance of running queries and system load.

To issue queries, I create a separate standalone pod that can run the presto-cli with a custom image. This makes it easy to use the CLI interactively from your laptop by starting a session through ‘kubectl exec’:

> kubectl exec -it pod/presto-cli -- /opt/presto-cli --server presto:8080 --catalog hive --schema default

Or you can issue queries directly from the command line:

> kubectl exec -it pod/presto-cli -- /opt/presto-cli --server presto:8080 --catalog hive --schema default --execute "SHOW TABLES;"

In a later section, I will show how to set up Redash as a more versatile UI for end-users.

Example: Create a Table on S3

With everything set up to run Presto, we can test by creating some tables. For this, I will install the TPC-DS connector by placing a trivial config file in etc/catalog/. This connector creates the table data on demand for each of the 24 tables, so directly running queries on these tables bottlenecks quickly on the data generation process. For a faster alternative, we will create a persistent version of the tables on S3 that all subsequent queries use.
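For reference, the trivial config file can be as small as a single line naming the connector. The sketch below writes it as `tpcds.properties`, which makes the catalog available under the name `tpcds`. The default directory is an assumption based on the paths used elsewhere in this post.

```shell
#!/bin/bash
# Sketch: enable the TPC-DS connector with a one-line catalog file.
# Assumption: the default etc directory mirrors paths used earlier in the post.

add_tpcds_catalog() {
    local etc_dir="${1:-/opt/presto-server/etc}"
    mkdir -p "$etc_dir/catalog"
    # The file name (tpcds.properties) determines the catalog name (tpcds).
    echo "connector.name=tpcds" > "$etc_dir/catalog/tpcds.properties"
}
```

Since this file is the same in every environment, it is a reasonable candidate for baking into the Docker image rather than a configmap.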

In the ‘tpcds’ catalog, there are several schemas for different scale factors. You can list the schemas, and the tables within one of them, with the following two queries:

presto:default> SHOW SCHEMAS FROM tpcds;

…

presto:default> SHOW TABLES FROM tpcds.sf1000;

Create a single table on S3 by first creating a new schema stored in a location on your S3 bucket. Then, persist the data to S3 with a simple CTAS statement:

presto:default> CREATE SCHEMA hive.tpcds WITH (location = 's3a://joshuarobinson/warehouse/tpcds/');

presto:default> CREATE TABLE tpcds.store_sales AS SELECT * FROM tpcds.sf100.store_sales;

Instead of manually creating each of the 24 individual TPC-DS tables, I created a bash script to automate the process for each table:

#!/bin/bash

SCALE=1000 # Scale factor

# Run a single SQL statement through the presto-cli pod.
sql_exec() {
    kubectl exec -it pod/presto-cli -- /opt/presto-cli --server presto:8080 --catalog hive --execute "$1"
}

# List the TPC-DS table names, stripping the quotes from the CLI output.
declare TABLES="$(sql_exec "SHOW TABLES FROM tpcds.sf1;" | sed s/\"//g)"

sql_exec "CREATE SCHEMA hive.tpcds WITH (location = 's3a://joshuarobinson/warehouse/tpcds/');"

# Persist each table to S3 with a CTAS statement.
for tab in $TABLES; do
    sql_exec "CREATE TABLE tpcds.$tab AS SELECT * FROM tpcds.sf$SCALE.$tab;"
done

Note that Presto's writes to S3 are simpler and more efficient than those of the Hadoop family of applications, e.g., Hive or Spark, which use S3A. By default, S3A makes an extra copy of the data on writes in order to simulate a rename operation. In contrast, Presto treats the object store as it is, without pretending it is a filesystem.

Finally, issue queries as normal against the data stored on S3:

presto:tpcds> SELECT COUNT(*) FROM store_sales;

Component 3: Redash

Redash is an open-source UI for creating SQL queries and dashboards against many input data sources, including Presto. I derived my Redash deployment in Kubernetes from the official docker-compose configuration.

My Redash uses a single deployment with multiple different containers in the same pod. This simplifies some aspects of the deployment at the expense of intertwining dependencies. The advantage is that individual processes can communicate with each other via localhost, as though they are running on the same physical machine. While this approach mirrors the original docker-compose configuration, a more robust production-ready implementation should split the application into multiple pods and services.

Similar to the metastore service we set up previously, Redash has a one-time job “create_db” to create the necessary relational database tables.

> kubectl exec -it pod/redash-5ddb586ff5-vsz4d -c server -- /app/bin/docker-entrypoint create_db

I connect to Redash from the browser on my laptop by first enabling external connections with port-forwarding:

> kubectl port-forward --address 0.0.0.0 service/redash 5000

Configure the Presto connector within Redash as per the screenshot below:

The “Host” and “Port” fields correspond to the Presto service inside Kubernetes and automatically route connections to the right pods.

Once Redash is configured, you can proceed to create queries and dashboards to leverage Presto and the data stored in your S3 data hub.

For example, the dashboard below monitors several statistics over time with queries that update periodically.

The above monitoring dashboard leverages data that comes from the Rapidfile toolkit to track daily filesystem metadata on a shared NFS mount with ~500M files. More on this dashboard later.

Summary

Together, the Hive Metastore, Presto, and Redash create an open source, scalable, and flexible data warehouse service built on top of an S3 data hub. The scalability and resilience of both Kubernetes and FlashBlade make this data hub strategy manageable and agile at scale.

Building this service inside of Kubernetes also demonstrates the variety of options for configuring applications, including container images, environment variables, configmaps, and secrets. Each component brings out different reasons and tradeoffs for how to effectively use Kubernetes features so you can create agile as-a-service infrastructure instead of single, monolithic applications.